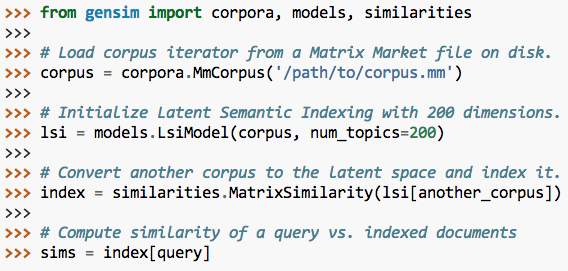

I recently started learning about Latent Dirichlet Allocation (LDA) for topic modelling and was amazed at how powerful it can be while still being quick to run. Topic modelling is the task of using unsupervised learning to extract the main topics (each represented as a set of words) that occur in a collection of documents.

The data set I used is the 20 Newsgroups data set, which has thousands of newsgroup posts spread across 20 topic categories. In this data set I knew the main topics beforehand and could verify that LDA was correctly identifying them. The code is quite simple and fast to run.

LDA is used to classify text in a document to a particular topic. It builds a topic-per-document model and a words-per-topic model, each with a Dirichlet prior. Each document is modeled as a multinomial distribution over topics, and each topic is modeled as a multinomial distribution over words. LDA assumes that documents are produced from a mixture of topics, and that those topics then generate words based on their probability distribution. It also assumes that every chunk of text we feed into it will contain words that are somehow related, so choosing the right corpus of data is crucial. To learn more about LDA please check out this link.

For comparison, when we use k-means we supply k as the number of topics. We may then get the predicted labels out for topic assignment. Note that this approach makes LSI a hard topic assignment approach (not hard as in difficult, but hard as in only 1 topic per document), whereas LDA lets a document mix several topics.
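The generative story that LDA assumes (a document draws a topic mixture, then each word is drawn from one of those topics) can be sketched with the standard library alone. This is only an illustration of the assumption, not a training algorithm: the two topics, their word probabilities, and the `alpha` values below are made up for the example.

```python
import random


def sample_dirichlet(alpha, rng):
    """Draw one Dirichlet sample by normalizing independent gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def generate_document(topics, alpha, length, rng):
    """Generate one document following LDA's assumed generative process."""
    # 1. Draw this document's mixture over topics.
    theta = sample_dirichlet(alpha, rng)
    topic_names = list(topics)
    words = []
    for _ in range(length):
        # 2. Pick a topic for this word position according to the mixture.
        topic = rng.choices(topic_names, weights=theta, k=1)[0]
        # 3. Pick a word according to that topic's word distribution.
        vocab = list(topics[topic])
        probs = [topics[topic][w] for w in vocab]
        words.append(rng.choices(vocab, weights=probs, k=1)[0])
    return theta, words


# Hypothetical topics: each one is a probability distribution over words.
TOPICS = {
    "space": {"orbit": 0.5, "launch": 0.3, "nasa": 0.2},
    "hockey": {"goal": 0.4, "team": 0.4, "season": 0.2},
}

rng = random.Random(0)
theta, words = generate_document(TOPICS, alpha=[0.5, 0.5], length=10, rng=rng)
print("topic mixture:", [round(p, 3) for p in theta])
print("document:", " ".join(words))
```

Fitting LDA runs this story in reverse: given only the words, it estimates the per-document mixtures and the per-topic word distributions that most plausibly produced them.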
The preprocessing step tokenizes a small sample corpus, drops common words and words that appear only once, and builds a gensim dictionary:

    import matplotlib.pyplot as plt
    from collections import defaultdict
    from gensim import corpora

    # on matplotlib 3.6+ this style is named 'seaborn-v0_8'
    plt.style.use('seaborn')

    documents = [
        "Human machine interface for lab abc computer applications",
        "A survey of user opinion of computer system response time",
        "The EPS user interface management system",
        "System and human system engineering testing of EPS",
        "Relation of user perceived response time to error measurement",
        "The generation of random binary unordered trees",
        "The intersection graph of paths in trees",
        "Graph minors IV Widths of trees and well quasi ordering",
    ]

    # remove common words and tokenize
    stoplist = set('for a of the and to in'.split())
    texts = [
        [word for word in document.lower().split() if word not in stoplist]
        for document in documents
    ]

    # remove words that appear only once
    frequency = defaultdict(int)
    for text in texts:
        for token in text:
            frequency[token] += 1

    texts = [
        [token for token in text if frequency[token] > 1]
        for text in texts
    ]

    # map each remaining token to an integer id
    # (the source line is cut off after `corpora.`; Dictionary(texts) is the assumed completion)
    dictionary = corpora.Dictionary(texts)
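To make the dictionary step less of a black box, here is a minimal pure-Python sketch of the bag-of-words idea behind it: every token gets an integer id, and each document becomes a list of `(token_id, count)` pairs. The helper names `build_token2id` and `doc_to_bow` and the sample token lists are hypothetical, and the ids gensim assigns may differ from the ones produced here.

```python
from collections import Counter


def build_token2id(texts):
    """Assign an integer id to each distinct token, in order of first appearance."""
    token2id = {}
    for text in texts:
        for token in text:
            if token not in token2id:
                token2id[token] = len(token2id)
    return token2id


def doc_to_bow(tokens, token2id):
    """Convert a token list to sorted (token_id, count) pairs, skipping unknown tokens."""
    counts = Counter(token2id[t] for t in tokens if t in token2id)
    return sorted(counts.items())


# Hypothetical token lists, standing in for already-filtered documents.
texts = [
    ["human", "interface", "computer"],
    ["survey", "user", "computer", "system", "response", "time"],
    ["graph", "trees"],
]

token2id = build_token2id(texts)
bow_corpus = [doc_to_bow(text, token2id) for text in texts]

# A new document: "interaction" is not in the vocabulary, so it is dropped.
new_doc = doc_to_bow("human computer interaction computer".split(), token2id)
print(token2id)
print(bow_corpus)
print(new_doc)
```

In gensim the equivalent conversion is done by the dictionary's `doc2bow` method, and the resulting bag-of-words corpus is what a topic model such as LDA is trained on.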
